What is Trustworthy Artificial Intelligence?

I recently attended the SAS INNOVATE conference, which introduced a concept I hadn’t heard of before. In particular, a presentation by Eric Yu, Principal Solutions Architect in the Data Ethics Practice, caught my attention. For those of you who don’t know, SAS has been delivering artificial intelligence and machine learning solutions for more than 20 years and is one of the premier analytics companies worldwide. SAS was founded by Dr. Jim Goodnight, formerly a statistics professor at NC State University.

Eric discussed the concept of “Trusted AI,” a topic I hadn’t heard of before but one that is increasingly garnering headlines. Most recently, Henry Kissinger described AI as the new frontier of arms control during a forum at Washington National Cathedral on Nov. 16. If leading powers don’t find ways to limit AI’s reach, he said, “it is simply a mad race for some catastrophe.” The former secretary of state cautioned that AI systems could transform warfare just as they have transformed chess and other games of strategy, because they are capable of making moves that no human would consider but that have devastatingly effective consequences.

This is true not just in warfare, but also in supply chains. As we move toward a digital future in which machines, not humans, will increasingly call the shots, what are the risks of ceding that control? More and more data is being moved into the cloud, which gives us readier access to reams of data that algorithms can process to make decisions. We have to be able to trust these algorithms to make the right decisions. But what does “Trustworthy AI” actually mean?

First, it is important to note that 90% of AI models never make it to production. It just isn’t that easy to create machine learning models that work as they’re supposed to; it often requires building thousands of models to scale up and mimic human decision-making. AI and robots still cannot reliably drive a car or mimic a warehouse worker picking up objects and packing them into boxes and containers.

Eric pointed out that to be successful at building trustworthy AI, several things are needed:

- You need a partner with experience. SAS meets that criterion, having built thousands of AI projects over the last 20 years, along with a library of optimization and simulation algorithms that can’t be replicated by anyone.

- You need a persistent strategy for digitization. Your organization has to be all in and willing to dedicate the resources to execute a persistent data strategy. You won’t succeed on the first try, so be ready to keep working at it.

- Be prepared to scale decision-making capabilities. “Impossible” is an opinion. To solve big problems, you have to be willing to take on something big.

Trustworthy AI involves recognizing first that AI is essentially probabilistic decision-making, incorporated into an expert system. Building trustworthy AI involves the continuous cycle of managing data, developing models, and deploying the insights. This sounds fairly straightforward, right? Guess again.

For one thing, get ready for increased regulation. The US Government Accountability Office (GAO) issued a report noting a lack of consensus on how to define and operationalize AI, along with an AI Accountability Framework organized around four principles: governance, data, performance, and monitoring. The White House has also issued the AI Bill of Rights, more of an aspirational document than anything else. However, it points out that AI decisions based only on available data can be flawed: hiring and credit decisions in particular have been found to reflect and reproduce existing unwanted inequities or to embed new harmful bias and discrimination.

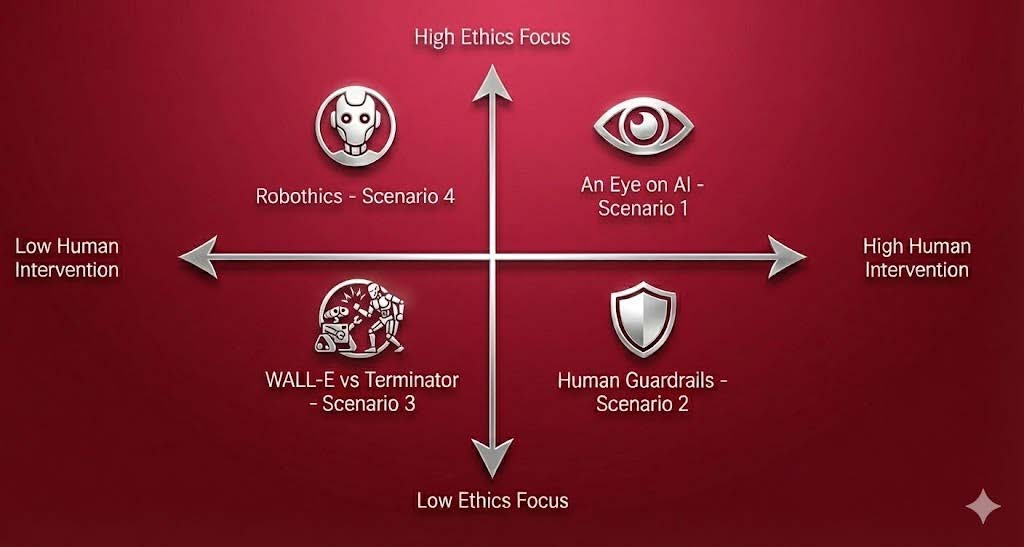

But driving toward AI standards that increase trustworthiness is easier said than done. The UK has also begun pursuing this goal, as has the EU, which is likely to explicitly define AI and how it may be used. Eric pointed out that trustworthy AI requires a comprehensive approach that spans the entire organization. Three primary elements determine the fiduciary responsibility for trustworthy AI: the Duty of Care, the Business Judgment Rule, and the Duty of Compliance Oversight. These pillars are needed to understand the historical biases that so often find their way into AI algorithms and that have produced injustices and inequities. But bias is not always a bad thing. At times, context plays a critical role in human decision-making, so introducing the right kinds of biases into AI is important to making it trustworthy.

None of these issues can be solved right away. My big takeaways are these: AI inherently carries both risks and rewards, and we need to identify the right applications for AI that balance the two. Companies also need to prepare for increased regulation and begin enacting their own internal principles and guidelines for adopting AI, perhaps even to pre-empt or influence those regulations. And finally, building trustworthy AI requires a comprehensive approach that spans the entire organization. It’s time to start thinking that way.