The Internet of Things: Does more data really make us more intelligent?

Recent articles, posts, and future trends studies are all awash with excitement about the Internet of Things. For instance, Mike Morley writes on the Material Handling and Logistics site that the Internet of Things will change everything:

“Billions of connected devices associated with global supply chains will transform the amount of information that can track shipments in real time. Digital information coming from these connected devices will drive increased levels of pervasive shipment visibility and this in turn will allow organizations to move towards more intelligent value chains.”

“Big Data” fans truly believe that mounds and mounds of data will be totally awesome, dude, and that all we need to worry about is how to capture this data. For example, Morley notes that:

“The key challenge moving forward is how companies capture and analyze a whole host of critical information from a variety of products, appliances and equipment and then communicate status updates and information as we approach the next phase in the evolution of the Internet.”

Given that I have a degree in statistics and operations management, believe me – I am a huge fan of having data. However, I am also a big fan of something called the “scientific method” – which means you can’t start digging into data unless you have a really good idea about what it is you hope to find in this pile of gibberish! I have been doing a lot of work recently in the area of supply chain intelligence and risk management, one of my favorite areas of discussion (if you haven’t noticed!). However, there seems to be some confusion in the press about how intelligence relates to data collected from objects. What is the difference between intelligence and data?

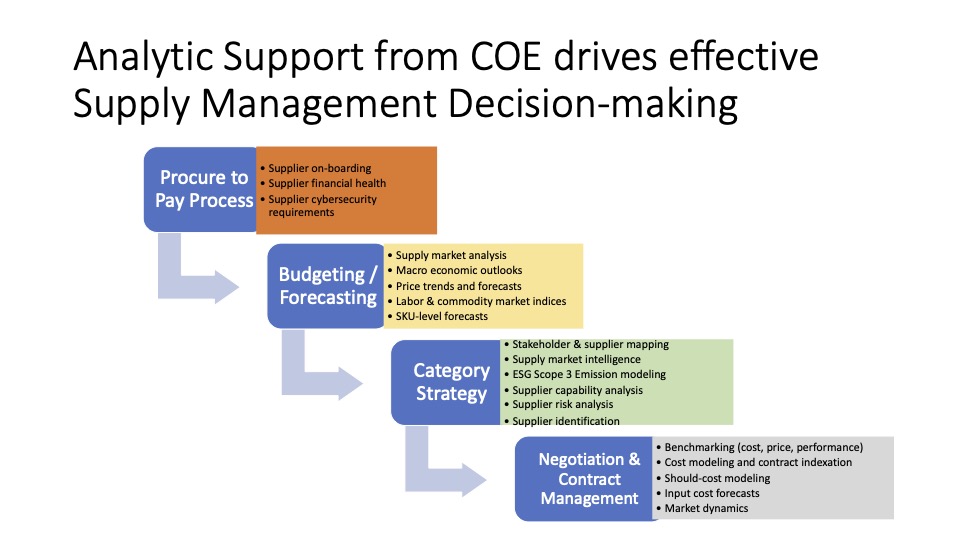

It seems that there is a whole school of thought that says that “If we have big enough data sets we will be able to locate non-obvious correlations because we have so many tests available to us.” That is the whole sales pitch in a nutshell. And lots of people are selling the idea of searching for non-obvious correlations, and making plenty of dollars in the process. However, searching for non-obvious correlations will never help identify the root cause of whatever it is you’re trying to solve. In fact, there is a whole set of tools and approaches called the Structured Analytic Methods that are used to derive and build the hypotheses that drive you to look for relationships in the data to address whatever it is you are trying to solve. These approaches have to do with the way that people think, how they elicit information, and how they explore and arrive at what a root cause might be. This is a whole different capability that has nothing to do with buying enough machines, RFID tags, or benchmarking dashboards.
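To see why “so many tests” cuts both ways, here is a minimal sketch in Python. It is my own illustration, not anything from a vendor's toolkit: the function names, the 30-observation sample size, and the 0.36 cutoff (roughly the two-sided 5% critical value for a correlation on 30 points) are all assumptions chosen for the example. It compares one series of pure random noise against many other series of pure random noise and counts how many look “significantly” correlated.

```python
import math
import random


def pearson(x, y):
    """Plain Pearson correlation coefficient of two equal-length lists."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)


def count_spurious(n_signals=200, n_obs=30, threshold=0.36, seed=0):
    """Count noise series that 'correlate' with an unrelated noise target.

    Every series is independent random noise, so any correlation that
    clears the threshold is a false discovery by construction.
    The threshold of 0.36 is an assumed cutoff, approximately the
    two-sided 5% critical value for n_obs = 30.
    """
    rng = random.Random(seed)
    target = [rng.gauss(0, 1) for _ in range(n_obs)]
    hits = 0
    for _ in range(n_signals):
        noise = [rng.gauss(0, 1) for _ in range(n_obs)]
        if abs(pearson(target, noise)) >= threshold:
            hits += 1
    return hits


if __name__ == "__main__":
    for n_signals in (20, 200, 2000):
        found = count_spurious(n_signals=n_signals)
        print(f"{n_signals} unrelated signals -> {found} 'significant' correlations")
```

With a 5% cutoff you should expect roughly one false hit for every twenty signals tested, so the count of “non-obvious correlations” grows with the number of sensors even though there is nothing to find. A hypothesis stated in advance is what tells you which of those relationships is worth testing in the first place.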

It is interesting that one of the major consulting firms in DC is offering “big data” capabilities to construct dashboards that provide real-time data. But if you look behind the curtain at this organization, it involves human curation. There are human beings who are doing the data crunching, analysis, and production of the dashboards. They are buying large datasets, and working through them manually to cleanse, organize, and perform statistical analysis to derive performance measures.

Thinking about supply chain intelligence, like so much else, has trended towards the belief that big data will solve all our problems. In my opinion, organizations need to be investing more in analysts who understand how to codify and apply standard analytic methods to specific problems using the scientific method and the power of evidence applied to competing hypotheses. There are ways to derive much more intelligent outcomes if you are careful and thoughtful about it…