Data Cleansing as the Foundation for Supply Chain Analytics

Many organizations are focused on driving analytics as a foundation for competitive advantage. Often overlooked in this discussion is the importance of establishing a foundation for analytics through data readiness and data cleansing. The Data Readiness Level (DRL) is a quantitative measure of the value of a piece of data at a given point in a processing flow. It can be envisioned as the data version of the Technology Readiness Level (TRL). The DRL is a rigorous metrics-based assessment of the value of data in various states of readiness. It represents the value of a piece of data to an analyst, analytic, or other process, and it will change as that data moves through, is interpreted by, or is changed by that process. The aggregate change in DRL for a piece of data, from raw input signal to finished product, contributes to the characterization of a given analytic flow in terms of accuracy, efficiency, and other performance metrics.

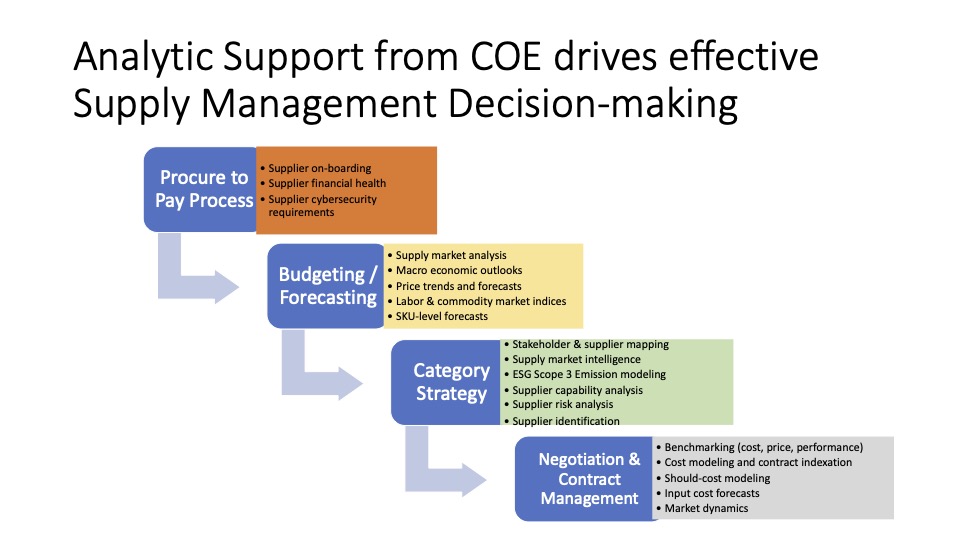

The process of preparing data for its intended use (one way of thinking about Data Readiness) in the context of Supply Chain Management can be defined by five distinct processes that span software systems, process management, and decision support: (1) Data Cleansing (which includes Data Acquisition, Cleansing, Preparation, and Database Population), (2) Spend Analytics, (3) Contract Management, (4) Technology Applications, and (5) Customer Service. Here we focus on Data Cleansing, which is the most time-consuming and challenging of the elements. (Once one has a good dataset, the others fall into line more easily!)

Ensuring You Have Good Data

Access to the right data is essential, as accurate and properly coded data provides the foundation for category management strategies, including leveraging, pricing agreements, quantity discounts, value analysis, supply base optimization and other important cost management activities. Data Cleansing is a process that involves four stages, as shown in Figure 1.

Data Acquisition

First, the user is contacted and the “raw” data is collected from different sources. Common sources of data include the customer’s MMIS, the GPO, and local suppliers. In a broader context, the data may include both structured and unstructured datasets. It is important at this stage that all relevant data of potential interest to the intended target is included in the analysis. Note that many providers restrict their data acquisition to electronic EDI data, or to structured data that is readily available, thereby missing a significant “chunk” of the total spend. The net impact of this oversight is an inaccurate representation of what the healthcare system is truly spending on third-party goods and services.

Data Cleansing

Jason Busch notes that, from a technical perspective, first-generation data cleansing approaches were limited by the underlying architecture, development, analytical and visualization capabilities available to providers at the time. This is still a major problem for many applications. One example of the constraints imposed by old technology platforms is the limits of relational database technology based on disk storage, together with traditional data warehousing approaches to storing, querying and accessing information and reports. Newer approaches, such as SAP’s HANA database, provide RAM-based storage that can materially increase query speeds, as well as workarounds to traditional storage and query models. These greatly increase both the speed with which we can search and access information and the ability to search information sets in the context of each other.

One of the most important foundational shifts in data cleansing technology in the past 18 months has been an interest in greater flexibility and visibility into the classification process. Increasingly, more advanced organizations are looking for the ability to classify spend to more than one taxonomy at the same time (e.g., customized UNSPSC and ERP materials code), as well as the ability to reclassify spend in order to analyze different views and cuts of the data based on functional roles and objectives. Moreover, some organizations are looking to exert greater control over the spend visibility process; these organizations are often becoming distrustful of “black box” approaches to gathering and analyzing spend data.

It is important to note here that some providers we spoke with believed that data cleansing is not as valuable as data normalization. The point was made that “normalization does not have to cleanse the data to make effective use of the resulting analysis.” We disagree with this point. If data normalization were acceptable without cleansing, healthcare would not be adopting GS1 standards to address the issue of manufacturers publishing data with a “warranty” of accuracy. Accurate and clean data is critical for any type of analytics or normalization effort. If “garbage” goes in, then the resulting output is likely to be “garbage” as well!

Next, the data is checked for completeness and accuracy. Data Cleansing and Enriching involves ensuring that the data has no duplicates and is organized into a logical structure in a database. This is done by first comparing each item to the existing codes in the database and determining whether it closely matches a product already stored from other companies. Where there is no match, an individual known as a “mapper” cleanses the data item or creates a new product code for it, along with the appropriate links to the manufacturer ID, the vendor ID, the unit of measure, and other pertinent information about it.
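The match-or-map step described above can be illustrated with a minimal Python sketch. It is only an illustration under stated assumptions, not a description of how any particular provider does it: the item master `ITEM_MASTER`, the product codes, the 0.85 similarity threshold, and the function names are all hypothetical, and the standard library's `difflib.SequenceMatcher` stands in for whatever matching logic a real tool would use.

```python
from difflib import SequenceMatcher

# Hypothetical item master: product code -> canonical description.
ITEM_MASTER = {
    "P-0001": "glove exam nitrile medium box 100",
    "P-0002": "syringe 10 ml luer lock",
}


def normalize(text):
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    cleaned = "".join(ch if ch.isalnum() else " " for ch in text.lower())
    return " ".join(cleaned.split())


def best_match(description, master, threshold=0.85):
    """Return (code, score) for the closest master entry.

    The code is None when no entry reaches the (assumed) threshold.
    """
    target = normalize(description)
    best_code, best_score = None, 0.0
    for code, canonical in master.items():
        score = SequenceMatcher(None, target, normalize(canonical)).ratio()
        if score > best_score:
            best_code, best_score = code, score
    if best_score >= threshold:
        return best_code, best_score
    return None, best_score


def cleanse(raw_descriptions, master):
    """Drop duplicates, match items to existing codes, queue the rest for a mapper."""
    matched, needs_mapper, seen = {}, [], set()
    for raw in raw_descriptions:
        key = normalize(raw)
        if key in seen:
            continue  # same item after normalization: treat as a duplicate
        seen.add(key)
        code, _score = best_match(raw, master)
        if code is None:
            needs_mapper.append(raw)  # no close match: a human mapper decides
        else:
            matched[raw] = code
    return matched, needs_mapper


raw_items = [
    "Glove, Exam, Nitrile, Medium, Box 100",
    "GLOVE EXAM NITRILE MEDIUM BOX 100",
    "Catheter foley 16 fr",
]
matched, needs_mapper = cleanse(raw_items, ITEM_MASTER)
print(matched)
print(needs_mapper)
```

In this sketch the second glove line is dropped as a duplicate of the first, the first is linked to an existing code, and the catheter line is routed to the mapper queue because nothing in the item master is close to it.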

Data Classification/ Normalization

One of the most important foundational shifts in spend analysis technology in the past few years has been an interest in greater flexibility and visibility into the classification process. Increasingly, more advanced organizations are looking for the ability to classify spend to more than one taxonomy at the same time (e.g., customized UNSPSC and ERP materials code), as well as the ability to reclassify spend in order to analyze different views and cuts of the data based on functional roles and objectives. Category analysis using data classification codes can also identify areas where “system internal co-sourcing” is taking place. This refers to situations where decisions regarding commodity items and physician preference items, as well as the actual selection of vendors, continue to be duplicated at both hospital and system levels. This is an expensive proposition: it duplicates the effort of identifying products and suppliers, developing and managing requests for proposal and information, optimizing proposals and obtaining offers, finalizing awards, and implementing and monitoring contracts. As systems migrate from being holding companies to operating companies, reducing internal co-sourcing is an important strategic opportunity, but it will rely on effective data cleansing and coding as the basis for analysis and action.
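To make the idea concrete, the following Python sketch shows spend lines that carry two classification codes at once, and a simple check for categories that are being sourced by more than one buying entity. Everything in it is an assumption made for illustration: the records, the field names, the buyer names, and the category codes (which are placeholders, not real UNSPSC or ERP codes).

```python
from collections import defaultdict

# Hypothetical spend lines, each classified to two taxonomies at once.
# The codes are placeholders, not real UNSPSC or ERP material codes.
SPEND = [
    {"buyer": "System", "vendor": "Vendor 1", "unspsc": "X1", "erp_code": "GLV", "amount": 12000.0},
    {"buyer": "Hospital A", "vendor": "Vendor 2", "unspsc": "X1", "erp_code": "GLV", "amount": 4000.0},
    {"buyer": "Hospital B", "vendor": "Vendor 1", "unspsc": "X2", "erp_code": "SYR", "amount": 2500.0},
]


def spend_by(records, taxonomy):
    """Total spend per code under the chosen taxonomy ('unspsc' or 'erp_code')."""
    totals = defaultdict(float)
    for rec in records:
        totals[rec[taxonomy]] += rec["amount"]
    return dict(totals)


def co_sourced(records, taxonomy):
    """Codes bought by more than one buying entity, with the buyers involved."""
    buyers = defaultdict(set)
    for rec in records:
        buyers[rec[taxonomy]].add(rec["buyer"])
    return {code: sorted(names) for code, names in buyers.items() if len(names) > 1}


print(spend_by(SPEND, "unspsc"))
print(spend_by(SPEND, "erp_code"))
print(co_sourced(SPEND, "unspsc"))
```

Because every line keeps both codes, the same data can be cut by either taxonomy without reclassifying it. The co-sourcing check is only as good as the coding: if the two glove purchases had been coded differently, the duplication would not surface.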

Here again, the classification of products using coding systems is commonplace in the industry. Almost all of the providers I reviewed in my benchmark study had systems that would recognize a product and enrich it with the correct manufacturer name and item number, UNSPSC code, and descriptions, before uploading it into an ERP. It appears that DDS has this capability, as does Primrose.

An automated process augmented with a manual process is the current industry standard, because the combination increases both efficiency and accuracy. Customer service is important in this stage: involving personnel with the right expertise, for example by checking with subject matter experts on how to manage the data, is one of the key checkpoints. Further, proper coding of the data requires engaging subject matter experts to truly make sense of it. This is necessary to arrive at strategic sourcing decisions that will be effective. In this regard, a third party should be willing to provide a level of consulting and coordination that is consistent with the effort required to perform a thorough spend management project.

It is also important to emphasize that there are many different ways of coding a product, but software providers tend to use the same standards, which include UNSPSC or GS1 industry standard nomenclature systems. This is discussed later in more detail.

Database Population

Finally, the coded dataset is uploaded into the requisite application. Once uploaded, the real power of the data can be leveraged through merging with other data forms for benchmarking and cross-reference analysis. Application and data integration paradigms have already shifted in a number of non-healthcare applications from one of batch uploads from multiple source systems to real-time data queries that can search hundreds (or more) disparate sources while normalizing, classifying and cleansing information at the point of query. In the coming years, Oracle, IBM and D&B, all of which have purchased customer data integration (CDI) vendors, could begin to apply these techniques to spend and supplier information as well. CDI technology actually improves the integrity of the data from individual sources, allowing users to match and link disparate information sources with varying levels of accuracy. CDI tools can correct for data-entry mistakes, such as misspellings, across different data sources to provide an accurate picture. Here again, the ability to accurately match UNSPSC codes to items is dependent on the accuracy and transparency of the original dataset.
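As a rough illustration of matching and linking at the point of query, the Python sketch below links misspelled vendor names from two sources to one canonical name before totaling spend. It is a simplified stand-in, not how any CDI product works: the vendor names, the source records, the 0.8 cutoff, and the function names are hypothetical, and the matching relies on `difflib.get_close_matches` from the Python standard library.

```python
from difflib import get_close_matches

# Hypothetical canonical vendor list and two source systems.
CANONICAL_VENDORS = ["Acme Medical Supply", "Beta Surgical"]

SOURCE_A = [("Acme Medcial Supply", 1000.0), ("Beta Surgical", 250.0)]
SOURCE_B = [("ACME MEDICAL SUPPLY", 500.0), ("Gamma Labs", 75.0)]


def link_vendor(name, canonical, cutoff=0.8):
    """Return the canonical vendor name closest to `name`, or None if none is close."""
    lookup = {c.lower(): c for c in canonical}
    hits = get_close_matches(name.strip().lower(), list(lookup), n=1, cutoff=cutoff)
    return lookup[hits[0]] if hits else None


def query_spend(sources, canonical):
    """Normalize vendor names while reading each source, then total spend per vendor."""
    totals, unlinked = {}, []
    for source in sources:
        for name, amount in source:
            vendor = link_vendor(name, canonical)
            if vendor is None:
                unlinked.append(name)  # left for review rather than guessed
            else:
                totals[vendor] = totals.get(vendor, 0.0) + amount
    return totals, unlinked


totals, unlinked = query_spend([SOURCE_A, SOURCE_B], CANONICAL_VENDORS)
print(totals)
print(unlinked)
```

The cutoff controls the trade-off the text alludes to: a lower value links more misspellings but raises the risk of joining two genuinely different vendors, which is why unmatched names are set aside instead of being forced into the nearest record.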